There is a gap between how AI is described and how it is used.

Most descriptions focus on capability. Models are evaluated on what they can produce. Benchmarks measure performance across tasks. New releases are framed in terms of improvement.

Inside organisations, the conversation is different.

It is less about what a system can do, and more about what it can be trusted to do repeatedly.

The Demo-to-Deployment Gap

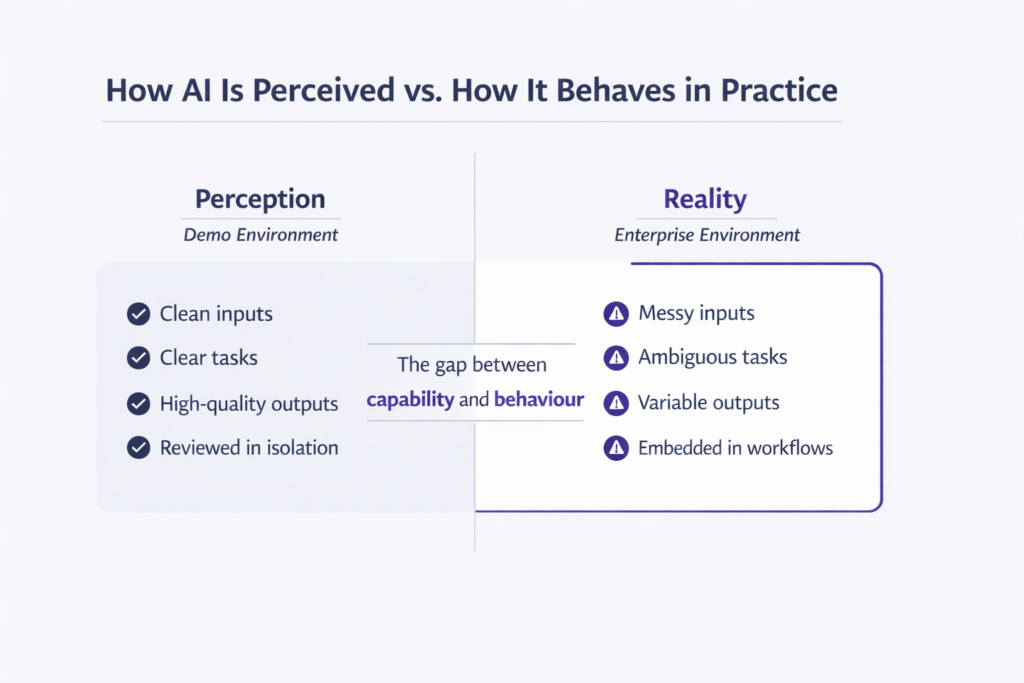

When enterprises first engage with AI systems, expectations are often shaped by polished demonstrations.

A model can summarise a document. It can generate a report. It can answer a question fluently.

These interactions are real – but they are also curated. They take place in conditions where the input is well-formed, the task is clearly defined, and the output is reviewed in isolation.

This is not how most systems are used in practice.

When the Same Model Meets Real Work

Inside an organisation, the same system meets different conditions. Inputs are messier. Tasks are less clearly bounded. Outputs are not reviewed individually but become part of ongoing workflows.

Small inconsistencies begin to matter. A slight change in phrasing alters an outcome. An edge case produces an unexpected result. An output that is mostly correct can still be unusable.

These are not failures in the conventional sense. They are properties of how the system behaves under variation.

Why Inconsistency Costs More Than Inaccuracy

In many enterprise contexts, inconsistency is more problematic than outright inaccuracy. A result that is inaccurate in the same way every time can be detected and corrected. An inconsistent system is harder to reason about, because it introduces uncertainty into workflows that depend on predictable behaviour.

Over time, organisations respond predictably:

- Additional checks are introduced

- Outputs are reviewed more frequently

- Human intervention increases

What begins as a capability advantage becomes an operational constraint. In practice, this is one reason AI initiatives stall: the cost of checking outputs grows until it offsets the benefit of generating them.

The New Priority: Reliability Over Raw Power

As a result, organisations shift their focus. The goal is no longer to maximise capability. It is to reduce uncertainty.

This changes what is valued. Instead of asking whether a system can perform a task, leaders begin to ask:

- Under what conditions does it perform reliably?

- How does it behave when those conditions change?

- How quickly can deviations be detected?

These are not questions about intelligence. They are questions about behaviour.
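One way to make the third question concrete is a rolling check on recent outputs. The sketch below is illustrative only: the `DeviationMonitor` class, its window size, and its threshold are assumptions rather than part of any particular product, and what counts as a "passed" check is left to the caller.

```python
from collections import deque


class DeviationMonitor:
    """Rolling check of how often recent outputs pass a caller-supplied test.

    Hypothetical helper for illustration; thresholds are arbitrary.
    """

    def __init__(self, window: int = 50, min_pass_rate: float = 0.9):
        # deque with maxlen keeps only the most recent `window` results
        self.results = deque(maxlen=window)
        self.min_pass_rate = min_pass_rate

    def pass_rate(self) -> float:
        # With no data yet, report 1.0 so that no alert is raised
        return sum(self.results) / len(self.results) if self.results else 1.0

    def record(self, passed: bool) -> bool:
        """Store one check result; return True if the window is below threshold."""
        self.results.append(passed)
        return self.pass_rate() < self.min_pass_rate


monitor = DeviationMonitor(window=5, min_pass_rate=0.8)
alerts = [monitor.record(ok) for ok in [True, True, True, False, False, True]]
print(alerts)
```

A smaller window detects deviations sooner but reacts more to noise; the right trade-off depends on how costly a missed deviation is in the workflow concerned.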

What Enterprises Can Safely Ignore (For Now)

This also clarifies what is less important, at least in the near term. Enterprises do not necessarily need systems that:

- Can handle every possible task

- Operate across arbitrary domains

- Maximise performance on general benchmarks

These capabilities dominate external narratives. But in practice, they are secondary to systems that behave consistently within defined boundaries.

Real Progress Happens in the Details

Progress does not primarily come from expanding what systems can do. It comes from narrowing how they behave – constraining inputs, defining use cases more precisely, and reducing variation in outputs.

This work is less visible than headline model improvements. But it is what allows systems to be integrated into real processes.
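As a rough illustration of what narrowing behaviour can look like in code, the sketch below wraps a system so that only in-scope inputs reach it and only in-scope outputs leave it. Everything here is an assumption made for the example: the label set, the length limit, the `bounded_call` name, and the choice to route anything unexpected to a human via `"escalate"`.

```python
from typing import Callable

# Hypothetical boundaries for a single, narrowly defined use case
ALLOWED_LABELS = {"approve", "reject", "escalate"}
MAX_INPUT_CHARS = 2000


def bounded_call(system: Callable[[str], str], text: str) -> str:
    """Run `system` only on in-scope input and accept only in-scope output."""
    cleaned = " ".join(text.split())  # collapse runs of whitespace
    if not cleaned or len(cleaned) > MAX_INPUT_CHARS:
        return "escalate"  # out-of-scope input goes to a human
    label = system(cleaned).strip().lower()  # normalise case and whitespace
    # Anything outside the allowed set is treated as a deviation
    return label if label in ALLOWED_LABELS else "escalate"


print(bounded_call(lambda t: " Approve\n", "Invoice matches purchase order"))
print(bounded_call(lambda t: "probably fine", "Invoice matches purchase order"))
```

The wrapper does not make the underlying system more capable. It reduces the number of distinct things downstream processes have to handle.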

Embracing – Not Eliminating – AI Uncertainty

Organisations do not eliminate uncertainty. They learn to work with it.

Systems are introduced gradually. Their behaviour is observed under different conditions. Limits are identified, and use is shaped accordingly.

This is not a one-off activity. It is an ongoing process of adjustment.

The Missing Layer: Controlled Observation Environments

This process depends on where and how systems are observed. In uncontrolled settings, behaviour may be visible but difficult to interpret. In tightly controlled production systems, observation may be limited by risk.

Organisations therefore need environments where systems can be exposed to realistic conditions without introducing unintended consequences – not to demonstrate capability, but to understand behaviour.

Sandboxing Reimagined: From Playground to Observation Lab

In this context, sandboxing is often described as a place to experiment. A more useful framing is that it is a space for observation.

It allows organisations to:

- Expose systems to variation

- See how they respond

- Identify patterns in behaviour

- Build confidence in specific use cases

This does not eliminate uncertainty. But it changes how uncertainty is encountered. It becomes something that can be examined, rather than avoided.
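A minimal sketch of this kind of observation, under stated assumptions: the same request is phrased several ways, each variant is sent to the system, and the share of outputs that agree with the most common answer is reported. The `consistency_report` function and the `toy_classifier` stand-in are hypothetical; a real evaluation would call the actual system and use a far larger, more representative set of variants.

```python
from collections import Counter
from typing import Callable, Iterable


def consistency_report(system: Callable[[str], str], variants: Iterable[str]) -> dict:
    """Run `system` on each variant and summarise how much the outputs agree."""
    outputs = [system(v) for v in variants]
    if not outputs:
        raise ValueError("at least one variant is required")
    counts = Counter(outputs)
    modal_output, modal_count = counts.most_common(1)[0]
    return {
        "modal_output": modal_output,
        "agreement": modal_count / len(outputs),  # share matching the modal output
        "distinct_outputs": len(counts),
    }


def toy_classifier(text: str) -> str:
    # Hypothetical stand-in for a model call: brittle keyword matching
    return "refund" if "refund" in text.lower() else "other"


variants = [
    "I want a refund for my order",
    "Please REFUND my order",
    "Can I get my money back for this order?",
    "Requesting a refund",
]
report = consistency_report(toy_classifier, variants)
print(report)
```

Here the third phrasing asks for the same thing without the keyword, so the stand-in system answers differently. The report surfaces that variation as a number that can be tracked per use case.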

The Real Competitive Edge: Mastering System Behaviour

As AI systems continue to improve, access to raw capability will become less of a differentiator. What will matter is how organisations work with these systems over time.

Some will continue to treat outputs as isolated results. Others will develop a deeper understanding of how systems behave within their context.

This difference is subtle. But it determines whether AI remains experimental or becomes operational.

The Rise of Behaviour-Focused Tooling

As this pattern becomes clearer, a different class of tooling is emerging – not focused on generating outputs, but on helping organisations observe, test, and understand them.

Platforms such as NayaOne sit squarely in this space, providing secure, controlled environments where AI systems can be explored under realistic conditions using synthetic data and enterprise-grade infrastructure.

The objective is not to increase capability. It is to make behaviour understandable.

What Enterprises Need from AI (and What They Don’t)

The question is not whether AI systems will become more capable. They will.

The question is whether organisations will become better at understanding how those systems behave.

Because in practice, what matters is not what a system can do once. It is what it can be relied on to do repeatedly, under conditions that are not fully controlled.

And that is a different kind of problem.
